Hearing Space

Project brief

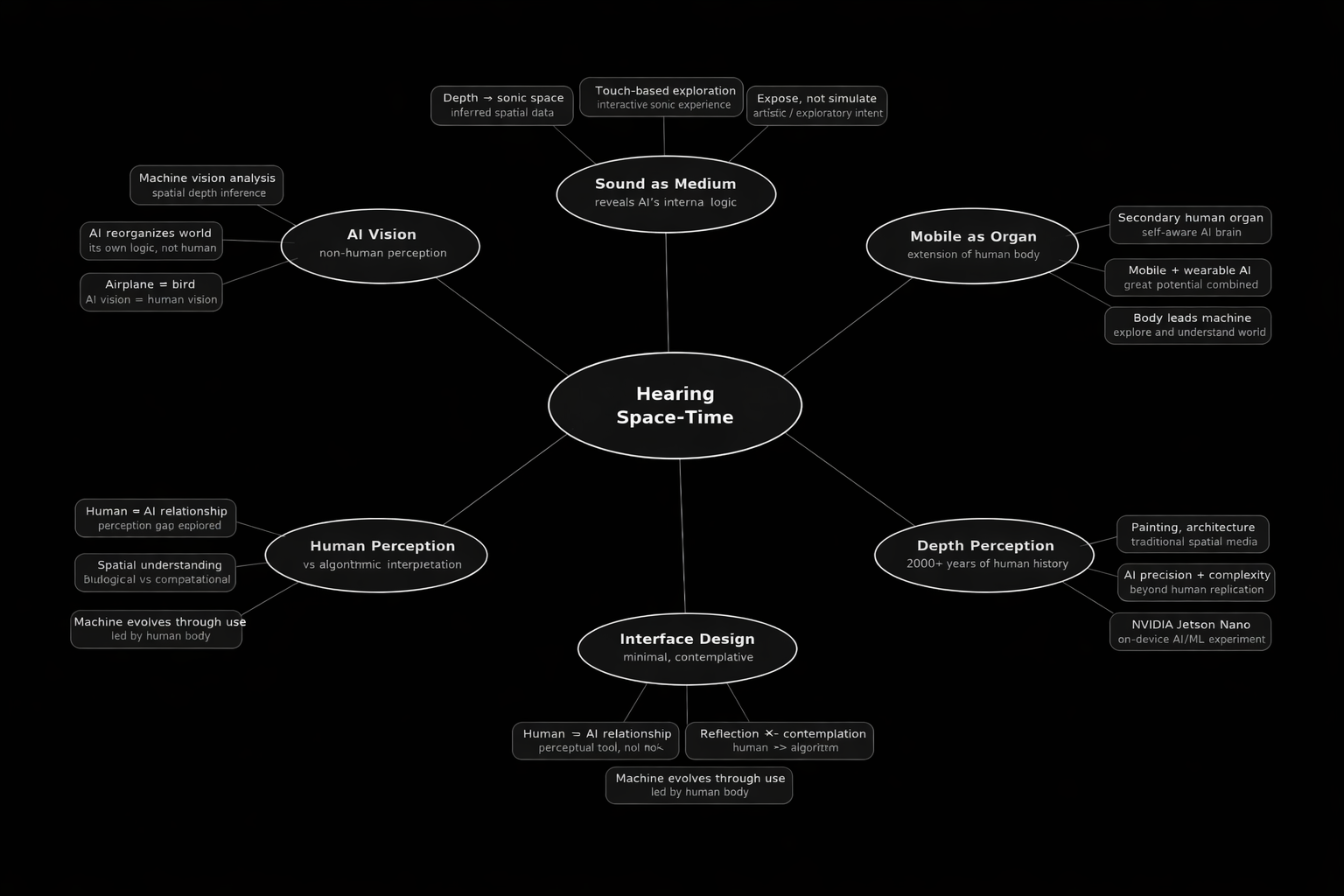

The app treats the mobile device as a perceptual instrument rather than a passive screen. Sound becomes a medium through which machine vision's internal logic is revealed, inviting reflection on the relationship between human perception, algorithmic interpretation, and spatial understanding. Hearing Space-Time is designed as an artistic and exploratory experience. It does not aim to simulate reality, but to expose how artificial intelligence reconstructs and reorganizes the world through inference.

- AI-based image analysis using machine vision

- Interactive sound generation based on inferred spatial depth

- Touch-based exploration of image-derived sonic space

- A minimal, contemplative interface designed for artistic engagement

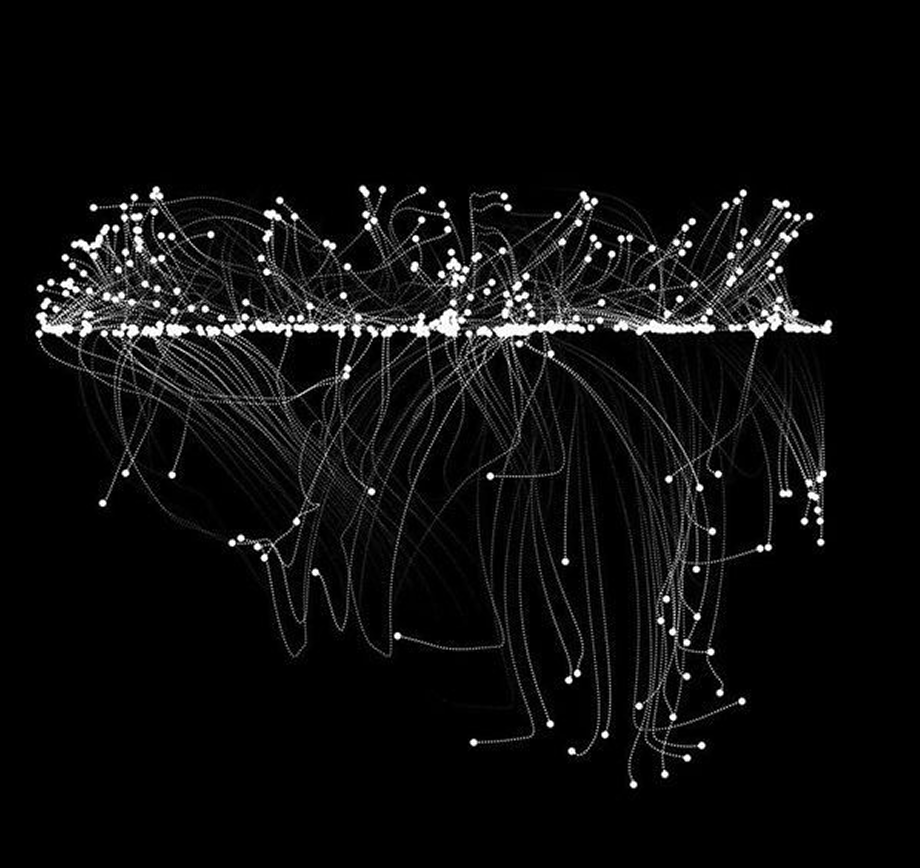

Environment: using Hearing Space

SEGMENT

Listen by objects

Detect objects in your photo. Tap each region to hear its sound.

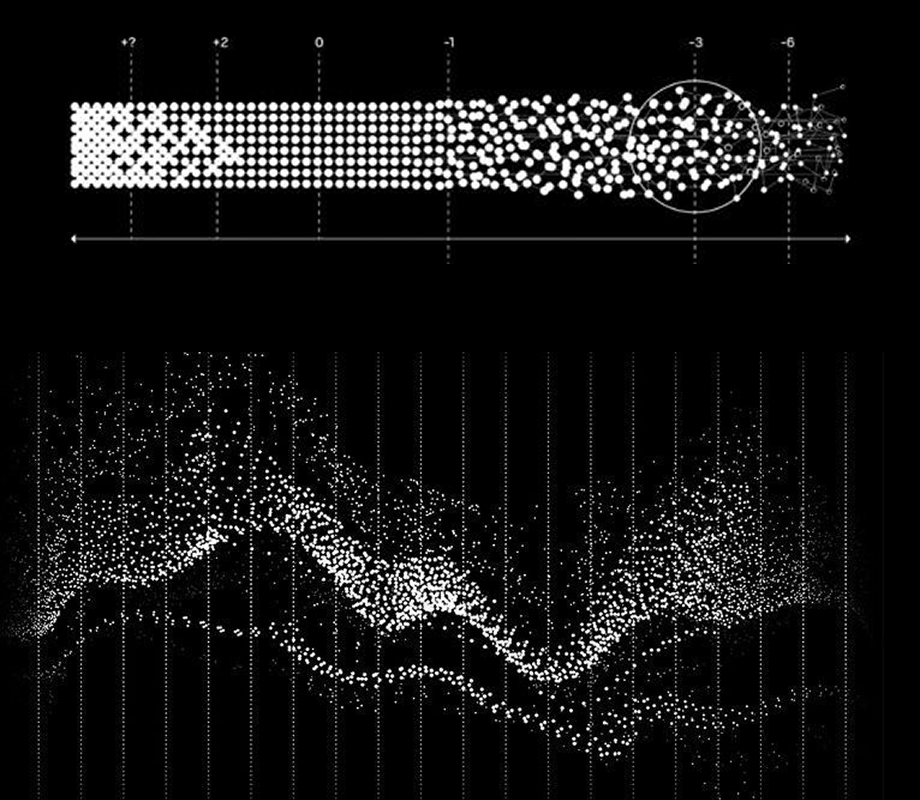

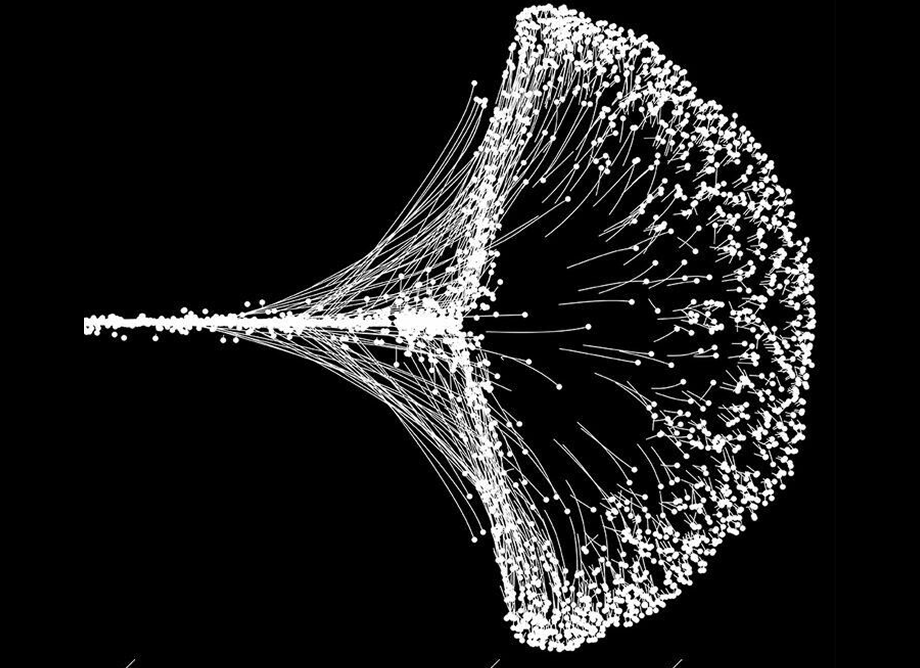

DEPTH

Listen by distance

Sense spatial depth in your photo. Tap different depths to hear space.
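As a rough illustration of how tapping a depth could turn into sound, here is a minimal sketch. It is not the app's actual implementation: the function names, the 110 to 880 Hz range, and the choice that nearer regions sound higher are all assumptions made for the example. It assumes the depth map is a grid of values normalized to 0 (nearest) through 1 (farthest).

```python
import math

def depth_to_frequency(depth, near_hz=880.0, far_hz=110.0):
    """Map a normalized depth (0 = nearest, 1 = farthest) to a pitch in Hz.

    Hypothetical mapping: interpolates in log-frequency so that equal
    steps in depth are heard as equal steps in pitch.
    """
    d = min(max(depth, 0.0), 1.0)  # clamp out-of-range depth values
    return near_hz * (far_hz / near_hz) ** d

def tap_to_frequency(depth_map, x, y):
    """Look up the depth under a tap at normalized coordinates (x, y) in [0, 1]."""
    rows, cols = len(depth_map), len(depth_map[0])
    row = min(max(int(y * rows), 0), rows - 1)
    col = min(max(int(x * cols), 0), cols - 1)
    return depth_to_frequency(depth_map[row][col])

def sine_tone(freq_hz, duration_s=0.25, sample_rate=44100):
    """Generate raw sine samples in [-1, 1] for the given pitch."""
    n = int(duration_s * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]

# A tiny 2x2 depth map: top-left is nearest, top-right is farthest.
depth_map = [[0.0, 1.0],
             [0.5, 0.5]]
samples = sine_tone(tap_to_frequency(depth_map, x=0.9, y=0.1))
```

Interpolating in log-frequency rather than linearly is a design choice for the sketch: it makes a tap halfway into the scene land on the musical midpoint between the near and far pitches.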

what we are experimenting with

Humans have explored spatial depth for more than 2,000 years through media such as painting and architecture. Working from algorithms and input data, AI can produce precise, complex results that people could not replicate on their own. AI vision, however, does not have to match human vision. We needed a tool to help us fly, so we invented the airplane, but an airplane does not need to work like a bird. That is what makes AI vision interesting: it has its own way of looking at our world.

At the same time, mobile and wearable devices have become extensions of the human body and intelligence, and combining them with AI has great potential. The human body leads the mobile machine to explore the world, understand it, and eventually evolve. A mobile phone equipped with an AI brain is no longer just a pre-programmed machine, but a secondary human organ with self-awareness and an autonomous system of its own.

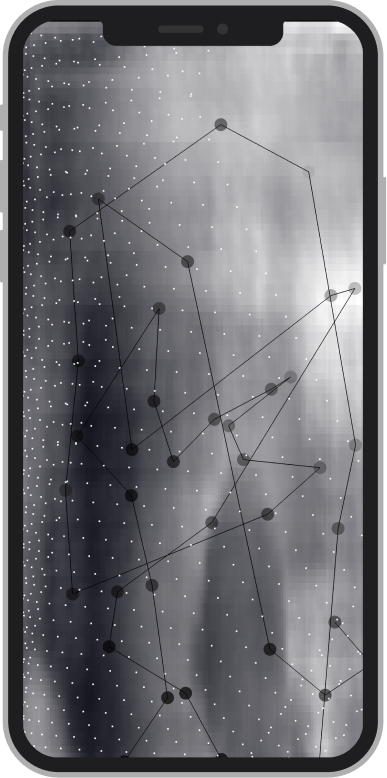

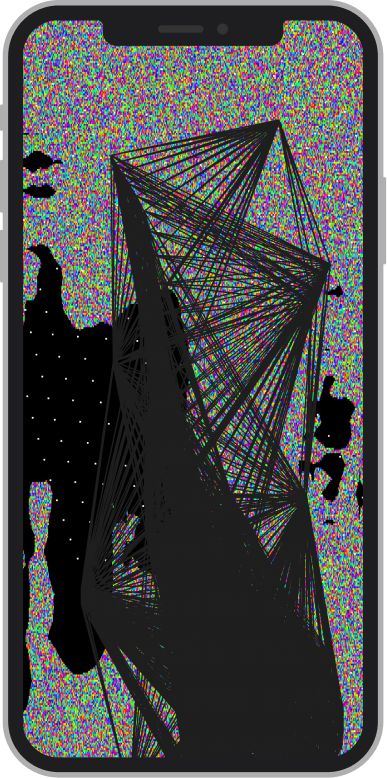

visual effect attempt (developing)

Partnering with Jing, we aimed to bridge the gap between cutting-edge tech and musical soul. For me, the ultimate challenge was aligning the aesthetics with our functional goals. Much of my creative process involved translating abstract AI concepts into a tangible visual experience. After numerous rounds of testing and refinement, we've arrived at this latest iteration, and development continues.

improvements

User feedback has been incredibly positive regarding the overall visual direction, yet we recognize key areas for refinement. To enhance the sense of space and immersion, we are addressing the monochrome fade issues experienced during interaction. Furthermore, we are deepening the UI detail to amplify the app's futuristic, high-tech aesthetic, ensuring every pixel serves a purpose.

Live on the App Store. Currently in active development, iterating on visual depth and spatial sound interaction.